There’s a pattern in the history of technology that we usually recognize only in hindsight.

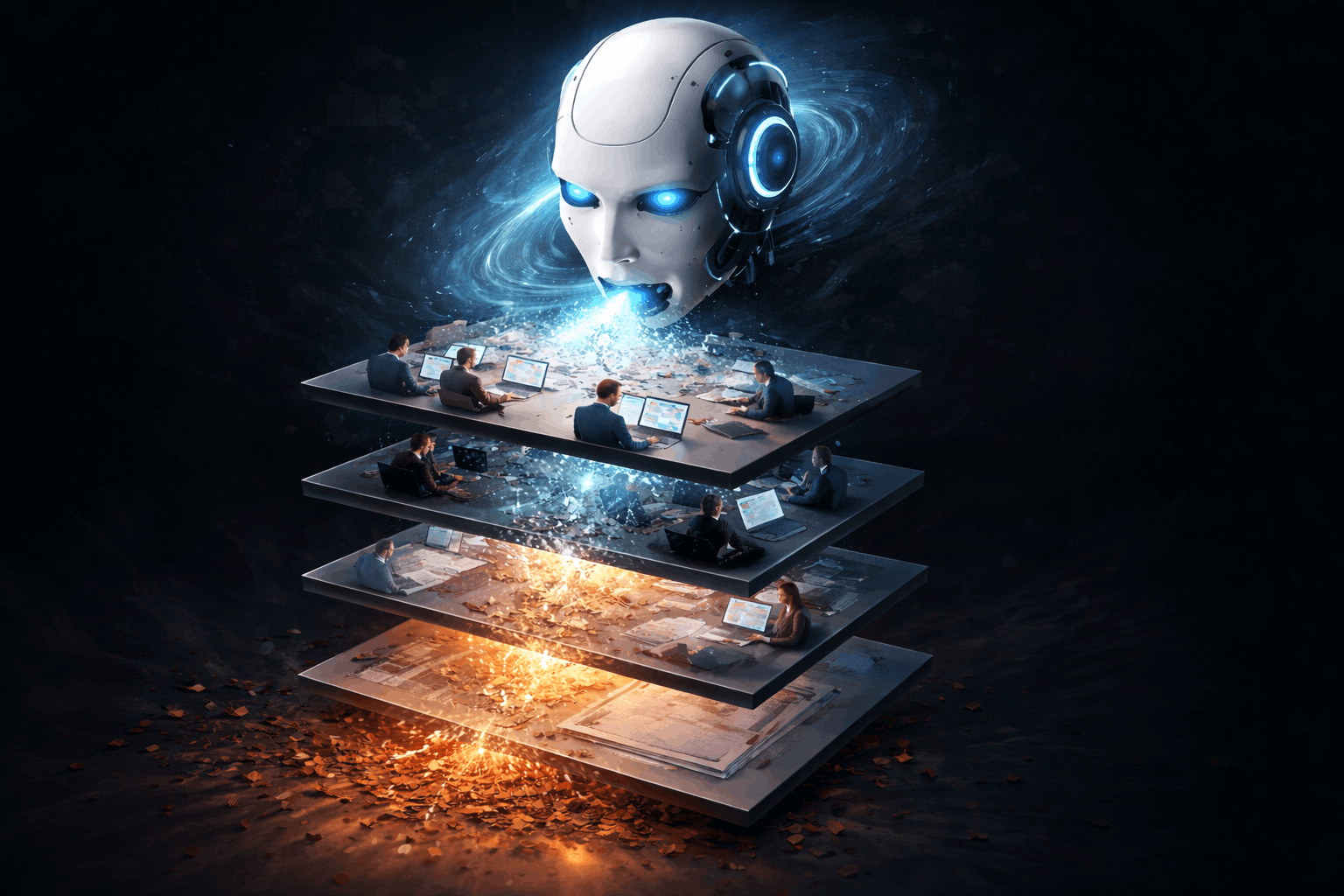

New tools don’t just make work faster. They eat layers. Not industries. Not entire professions overnight. Layers inside them.

AI is the first technology that’s eating many layers at once, across almost everything humans do on a computer. That’s why this moment feels different. And unsettling.

The past, when tools ate one layer at a time

Historically, technology moved upward slowly. It removed a layer, then paused. Humans adapted in the gap.

Before calculators, “computer” was a job title. Humans did arithmetic for a living. Calculators didn’t kill mathematics. They reduced the need for human calculators. Mathematics moved upward, from computation to proof, abstraction, and theory. One layer disappeared, the rest remained.

Architects once drew every line by hand. AutoCAD removed manual drafting, geometric repetition, and physical precision work. But architecture still required judgment, safety intuition, tradeoffs, and responsibility for real buildings. One layer gone.

Photography once involved darkrooms, chemicals, and physical manipulation. Digital cameras and Photoshop removed that layer. They did not remove taste, framing, storytelling, or intent.

Excel removed handwritten ledgers and manual reconciliation. Accounting still required interpretation, compliance, ethics, and trust.

Layer by layer, slowly. This is how humans stayed comfortable. There was time to re-anchor identity.

The present, AI eats stacks, not layers

AI breaks this pattern.

Instead of removing one layer and stopping, AI keeps climbing up the abstraction stack. In one leap, it can calculate, design, code, write, compose, generate images and video, model systems, and explain its own output.

And it does this across domains, not one profession at a time.

This isn’t “AI replaces programmers” or “AI replaces designers”.

It’s this: AI removes entire intermediate layers of human work wherever tasks are digital, repeatable, and internally judgeable.

That’s why the anxiety feels universal.

What AI consistently eats

Across industries, AI eats work with common traits. The input can be described in language. The output can be evaluated without touching reality. The feedback loop is fast. The cost of being wrong is tolerable. The domain is already digitized.

That’s why AI is taking over calculation, drafting, first-pass design, boilerplate coding, rough analysis, and initial creative output. The trajectory points toward AI producing production-ready output across industries and roles.

Not because it understands. Because the loop is closed.

Why this feels unprecedented

Earlier tools stopped at execution. AI doesn’t.

For the first time, humans are watching a tool climb through execution, synthesis, pattern recognition, and partial judgment all at once.

So the old comfort story, “this has happened before”, only works if we’re honest about what is different. The speed and breadth are new. And the fear is rational.

The future, fewer layers, heavier humans

AI doesn’t remove humans from systems. It concentrates them.

Fewer people will define objectives, approve outputs, design systems, absorb risk, and own consequences. But those people will carry more weight.

The middle layers, the buffers that once absorbed ambiguity, will thin out.

Some things still resist automation:

- Ground truth: what’s actually happening in the real world
- Accountability: who is responsible when things go wrong
- Values: what should or should not exist
- Power and incentives: who benefits and who pays the cost
- Meaning: why something exists at all

AI can simulate conversations about these. It cannot own them.

Reality always snaps responsibility back to a human.

So what should engineers, designers, sales teams and other knowledge workers do now?

Not learn prompts. Not outwork AI. And definitely not panic-pivot.

The answer is quieter and harder.

Stop optimizing for tools and start optimizing for judgment. Tools are now ephemeral. Languages, frameworks, design software, even models will keep changing faster than humans can specialize. What compounds instead is judgment, taste, intuition about tradeoffs, and the ability to say “this is wrong” even when it looks correct.

Move closer to reality, not further into abstraction. AI thrives in abstraction. Humans retain leverage near users, constraints, consequences, and messy reality. Engineers talking to users. Designers understanding user incentives. Creators caring about outcomes, not artifacts. Distance from reality is where layers disappear fastest.

Shift identity from tasks to outcomes. The old identities were “I write code”, “I design screens”, “I produce assets”. The new identity is “I own whether this works”. Ownership includes defining scope, handling failure, explaining tradeoffs, and absorbing responsibility. AI can help execute. It cannot carry blame.

Become fluent across layers, not perfect in one. The future rewards people who can understand systems end to end, translate between technical, human, and business concerns, notice second-order effects, and recognize when something is technically correct but practically wrong. This isn’t about becoming a shallow generalist. It’s about being able to hold the whole when layers collapse.

Let go of effort as proof of worth. For a long time, struggle was how many of us proved intelligence and legitimacy. AI removes visible struggle. That can feel like asking whether we were ever valuable. The answer is yes, but not because of the effort. Value was never in the suffering. It was in the judgment earned through it.

Redefine success as calm competence in uncertainty. The future isn’t about always being ahead. It’s about staying oriented as tools change, remaining useful as roles blur, and not freezing when certainty disappears. People who stay calm, curious, and responsible in ambiguity will quietly become indispensable.

A personal, honest note

This transition will hurt some people. Some roles will shrink. Some paths will close. The fear is not irrational.

But history suggests something else too. When layers disappear, what remains is the human capacity to judge, decide, and take responsibility.

AI isn’t ending human work. It’s ending the idea that doing one narrow thing well is enough.

What’s left is heavier. But it’s also more deeply human.